(from github.com/jhult)

I would like to search for a term such as “Networking” without Fess stemming it, so that this search does not return “Networks” or “Network”.

I have added “Networking” to en/protwords.txt.

However, searching for “Networking”, “Networks”, or “Network” always returns the same results.

I have deleted all documents and re-crawled. I have also tried closing the index and re-opening it.

I may have this configured wrong. Any assistance would be appreciated.

(from github.com/jhult)

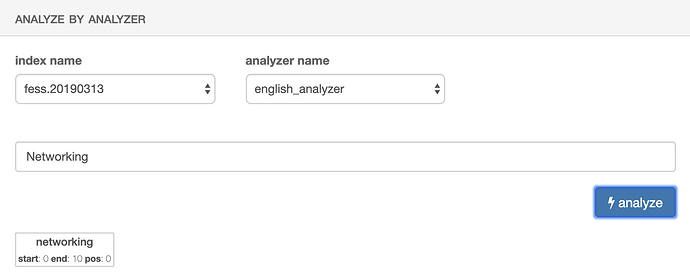

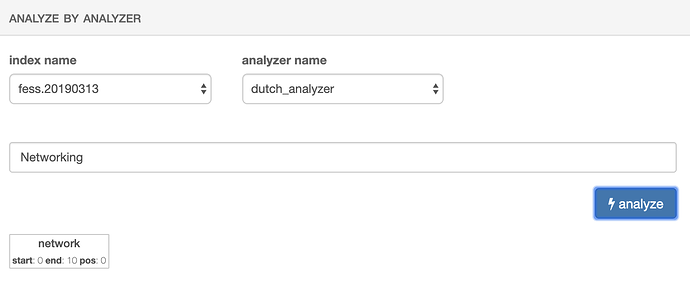

If I switch to a different language, it shows the term being stemmed.

English looks correct in the dashboard, but searches still seem to stem the term.

(from github.com/marevol)

`english_analyzer` uses the `possessive_english` stemmer.

To change that, you need to modify `fess.json` and recreate the fess index.

(from github.com/jhult)

Based on this, these are the steps I took to re-create the Fess index:

- Delete the `fess.yyyymmdd` index in the dashboard

- Stop Fess

- Start Fess

- A new `fess.yyyymmdd` index is created automatically
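The delete step can also be scripted against the Elasticsearch REST API (`DELETE /<index>`) instead of using the dashboard. A minimal sketch, assuming Elasticsearch is reachable at `http://localhost:9200` (an assumption; adjust for your install). `fess.yyyymmdd` stays a placeholder for the real dated index name, and the helper names are hypothetical:

```python
import urllib.request

# Assumption: Elasticsearch endpoint used by Fess; change to match your setup.
ES_URL = "http://localhost:9200"


def build_delete_index_request(es_url: str, index: str) -> urllib.request.Request:
    """Build an HTTP DELETE request for /<index>, which removes that index."""
    return urllib.request.Request(f"{es_url.rstrip('/')}/{index}", method="DELETE")


def delete_index(es_url: str, index: str) -> str:
    """Send the DELETE request and return the raw JSON response body."""
    with urllib.request.urlopen(build_delete_index_request(es_url, index)) as resp:
        return resp.read().decode("utf-8")
```

For example, `delete_index(ES_URL, "fess.yyyymmdd")` with the real index name substituted. Fess still has to be stopped and started afterwards so it creates the new index.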

(from github.com/jhult)

I edited `/usr/share/fess/app/WEB-INF/classes/fess_indices/fess.json`. It was originally:

```json
"english_analyzer": {
  "type": "custom",
  "tokenizer": "standard",
  "filter": [
    "truncate20_filter",
    "lowercase",
    "english_keywords",
    "english_override",
    "possessive_stemmer_en_filter"
  ]
},
```

I removed the `possessive_stemmer_en_filter` entry.
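Removing the last array element by hand is easy to get wrong: if the comma after `"english_override"` is left behind, the file is no longer valid JSON. A minimal sketch that edits the parsed JSON instead, assuming `fess.json` is plain JSON. The helper names are hypothetical, and the search is recursive so it does not assume where `english_analyzer` sits in the file:

```python
import json
from typing import Any


def remove_analyzer_filter(node: Any, analyzer: str, filter_name: str) -> int:
    """Recursively find dict entries keyed `analyzer` and drop `filter_name`
    from their "filter" list. Returns how many analyzer definitions changed."""
    changed = 0
    if isinstance(node, dict):
        for key, value in node.items():
            if key == analyzer and isinstance(value, dict):
                filters = value.get("filter", [])
                if filter_name in filters:
                    value["filter"] = [f for f in filters if f != filter_name]
                    changed += 1
            changed += remove_analyzer_filter(value, analyzer, filter_name)
    elif isinstance(node, list):
        for item in node:
            changed += remove_analyzer_filter(item, analyzer, filter_name)
    return changed


def strip_filter_in_file(path: str, analyzer: str, filter_name: str) -> int:
    """Load a JSON file, remove the filter, and write valid JSON back.
    Note: this rewrites the file's formatting (2-space indentation)."""
    with open(path, encoding="utf-8") as f:
        config = json.load(f)
    changed = remove_analyzer_filter(config, analyzer, filter_name)
    with open(path, "w", encoding="utf-8") as f:
        json.dump(config, f, indent=2, ensure_ascii=False)
    return changed
```

For example, `strip_filter_in_file(".../fess_indices/fess.json", "english_analyzer", "possessive_stemmer_en_filter")` with the full path from above; back up the file first, since it is overwritten in place.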

I then re-created the Fess index using the steps above. However, it is still not working…

Any other ideas?

(from github.com/jhult)

`/var/lib/elasticsearch/config/en/protwords.txt` contains “Networking”.
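In the `english_analyzer` chain quoted earlier, `lowercase` runs before `english_keywords`, so any keyword filter only ever sees `networking`. If `english_keywords` is an Elasticsearch `keyword_marker` filter reading this file (an assumption; its definition is not shown in this thread), matching is case-sensitive unless `ignore_case` is enabled, so a capitalized entry would not match. A small sketch that flags such entries; the helper names are hypothetical, and treating `#` lines as comments is an assumption:

```python
from pathlib import Path
from typing import List


def find_non_lowercase_entries(lines: List[str]) -> List[str]:
    """Return protected words containing uppercase characters.

    Blank lines and lines starting with '#' (assumed comments) are skipped.
    """
    flagged = []
    for raw in lines:
        word = raw.strip()
        if not word or word.startswith("#"):
            continue
        if word != word.lower():
            flagged.append(word)
    return flagged


def check_protwords(path: str) -> List[str]:
    """Read a word-list file and flag entries a lowercased token cannot match."""
    text = Path(path).read_text(encoding="utf-8")
    return find_non_lowercase_entries(text.splitlines())
```

For example, `check_protwords("/var/lib/elasticsearch/config/en/protwords.txt")` would flag a capitalized “Networking” entry.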

(from jhult (Jonathan Hult) · GitHub)

I should also mention that I am running the search as follows:

`/search/?num=10&sort=&q="Networking"`
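When the same query is issued from code rather than a browser, the double quotes around the phrase have to be percent-encoded. A minimal sketch that builds the URL above with the standard library; the base URL `http://localhost:8080` and the helper name are assumptions:

```python
from urllib.parse import urlencode

# Assumption: address of the Fess web UI; adjust to your installation.
FESS_URL = "http://localhost:8080"


def build_search_url(base_url: str, query: str, num: int = 10, sort: str = "") -> str:
    """Build a /search/ URL; urlencode percent-encodes the quotes in the query."""
    params = urlencode({"num": num, "sort": sort, "q": query})
    return f"{base_url.rstrip('/')}/search/?{params}"


print(build_search_url(FESS_URL, '"Networking"'))
# http://localhost:8080/search/?num=10&sort=&q=%22Networking%22
```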

(from github.com/marevol)

Fess uses a hybrid index with both `standard_analyzer` and a language analyzer (such as `english_analyzer`).

So you also need to check `standard_analyzer`.
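One way to check both analyzers is Elasticsearch's `_analyze` API, which returns the tokens a named analyzer produces for a given text. A sketch, assuming Elasticsearch at `http://localhost:9200` and keeping `fess.yyyymmdd` as a placeholder for the real index name; the helper names are hypothetical:

```python
import json
import urllib.request
from typing import List

# Assumption: Elasticsearch endpoint used by Fess; adjust for your setup.
ES_URL = "http://localhost:9200"


def build_analyze_request(es_url: str, index: str, analyzer: str, text: str) -> urllib.request.Request:
    """Build a POST /<index>/_analyze request for a named analyzer."""
    body = json.dumps({"analyzer": analyzer, "text": text}).encode("utf-8")
    return urllib.request.Request(
        f"{es_url.rstrip('/')}/{index}/_analyze",
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def tokens_from_response(payload: dict) -> List[str]:
    """Extract the token strings from an _analyze response body."""
    return [t["token"] for t in payload.get("tokens", [])]


def analyze(es_url: str, index: str, analyzer: str, text: str) -> List[str]:
    """Run _analyze and return the resulting tokens."""
    req = build_analyze_request(es_url, index, analyzer, text)
    with urllib.request.urlopen(req) as resp:
        return tokens_from_response(json.load(resp))
```

Calling `analyze(ES_URL, "fess.yyyymmdd", name, "Networking")` for both `standard_analyzer` and `english_analyzer` and comparing the token lists shows which analyzer reduces “Networking”.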